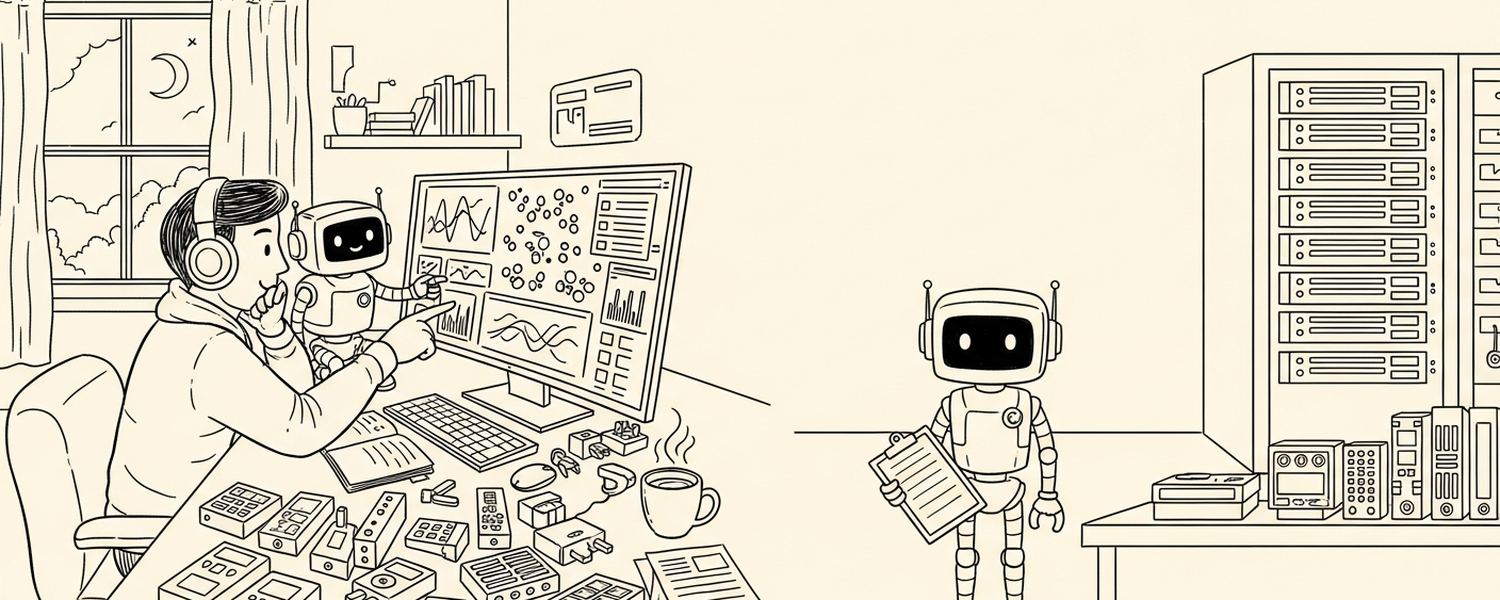

Step back to what the customer actually wants when things break, and the answer is short: the service works, the fix is fast, the customer is kept informed, and the problem doesn’t repeat. PagerDuty, Grafana, Datadog, Sentry, Slack: each does its job, and each does it well. But those are the team’s tools, not the customer’s vocabulary.

The implementation reflects a specific constraint: every layer of the existing stack was designed around what humans need in order to do incident response. When the responder changes from human to agent, the right question isn’t “what does each tool become.” That’s tool-first thinking, and it accidentally preserves the existing shape. The cleaner question goes layer by layer: does this layer’s function exist for the customer, or does its form exist because humans need it? Functions survive, because customers are permanent. Forms are up for renegotiation when the actor doing the work isn’t a human anymore.

...