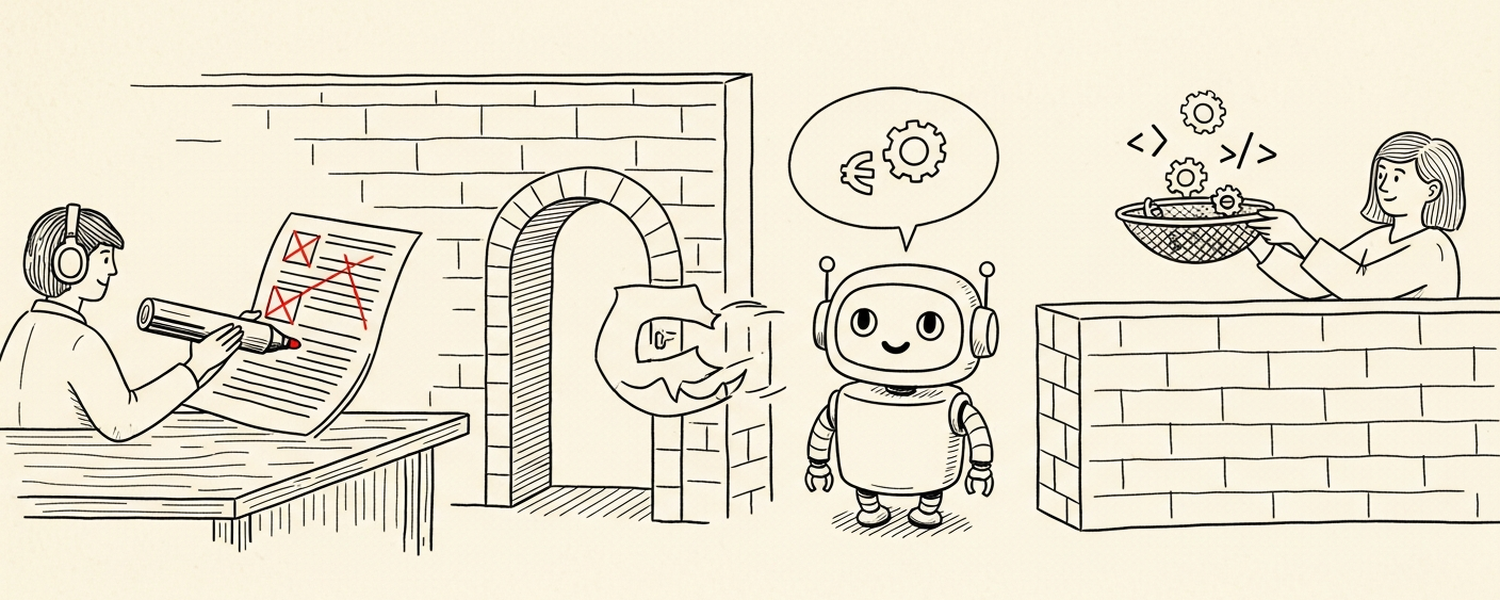

Two Boundaries: Redact Before You Prompt, Sanitize Before You Render

The LLM sits between two boundaries you have to defend. On the input side, treat it as an untrusted destination for sensitive strings. On the output side, treat what it produces as untrusted, user-controlled input, because prompt injection makes it exactly that. The LLM is not a trusted insider. Yet most threat models for LLMs in production treat the model itself as the security boundary: “the prompt says don’t leak the API key.” That’s a wish, not a control. The real boundaries sit around the model: at the data going in and the data coming out. ...
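The Python sketch below covers both boundaries. It is minimal and makes several assumptions. Model output is rendered as HTML and may contain Markdown. The regexes in `SECRET_PATTERNS`, the `ALLOWED_IMAGE_HOSTS` allowlist, and the function names `redact` and `sanitize` are hypothetical illustrations, not a complete secret detector or HTML sanitizer.

```python
import html
import re
from urllib.parse import urlparse

# Hypothetical, illustrative secret patterns; a real deployment needs a broader detector.
SECRET_PATTERNS = {
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "BEARER_TOKEN": re.compile(r"(?i)\bbearer\s+[a-z0-9._\-]{20,}"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact(text: str) -> str:
    """Input boundary: replace sensitive substrings before the prompt is assembled."""
    for label, pattern in SECRET_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{label}]", text)
    return text


# Hypothetical allowlist of hosts the UI is permitted to load images from.
ALLOWED_IMAGE_HOSTS = {"static.example.com"}
# Matches Markdown images: ![alt](url optional-title)
MD_IMAGE = re.compile(r"!\[([^\]]*)\]\(([^)\s]+)[^)]*\)")


def _drop_untrusted_image(match: re.Match) -> str:
    host = urlparse(match.group(2)).hostname or ""
    if host in ALLOWED_IMAGE_HOSTS:
        return match.group(0)
    # When the browser renders an injected image, its URL can carry
    # stolen data to the attacker in the query string.
    return f"[image removed: {match.group(1)}]"


def sanitize(model_output: str) -> str:
    """Output boundary: treat model text as untrusted before rendering it as HTML."""
    without_images = MD_IMAGE.sub(_drop_untrusted_image, model_output)
    # Escape &, <, >, " and ' so nothing the model emits is parsed as markup.
    return html.escape(without_images, quote=True)
```

The ordering matters. `redact` runs before any user or tool text is concatenated into the prompt, so the secret never enters the model's context and cannot be leaked by any prompt injection. `sanitize` runs last, immediately before rendering. At that point nothing the model emits is interpreted as markup, and it cannot trigger a request to a host you did not approve.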